Often, while browsing networking forums and blogs, I find posts by people asking how to set up a dual WAN connection with load balancing on a single box. They are looking for a way to connect the LAN to the Internet and carry VoIP traffic with an acceptable QoS level, and most of them have to handle VPN tunnels and a DMZ too!

Of course, a good network architect would never consider such a solution for this scenario, but when the budget is low (or does not exist at all) there are not many ways to have all these things running!

In this catastrophic scenario, policy-based routing (PBR) can save us!

Here you can find a small PBR-based solution and the GNS3 lab.

Scenario

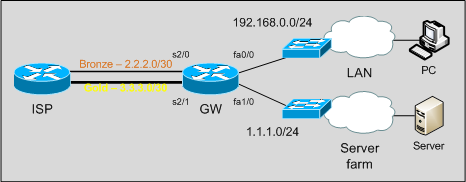

We have a router connected to the ISP with two WAN connections:

– a Bronze link, with little bandwidth, on which we have a /30 subnet;

– a Gold link, with good performance, on which we have a /30 point-to-point subnet and an additional /24 routed subnet.

Note that the ISP does not accept traffic sourced from a subnet other than the one routed through the link it arrives on: for example, we can’t send traffic from 1.1.1.0/24 out the Bronze link. One subnet, one link.

Objectives

Our goals are:

– users on the LAN need access to the Internet;

– mission-critical traffic has to go out through the Gold link;

– our servers have to be reachable from the outside on their public IP addresses.

For the sake of simplicity, in our example and lab the mission-critical traffic will be telnet traffic. In real life it can be RTP, database or other important traffic.

At first sight, we can see there is no way to achieve fault tolerance for the Server farm: if the Gold link goes down, we can’t do anything to keep the subnet reachable. OK, we can just tell the CIO to get a bigger budget for the network!

Solution

On this topology we have 5 interesting traffic flows:

– LAN -> Mission critical services [Gold]

– LAN -> WAN [Bronze]

– LAN -> Server farm

– ServerFarm -> WAN [Gold]

– ServerFarm -> LAN

Traffic going from the LAN towards the WAN or the mission-critical services also needs to be translated by NAT. Remember that, in the order of operations for inside-to-outside traffic, a packet is first routed and then translated, so for now we can focus on routing packets out of the box the right way. We will take care of NAT later.

Standard routing forwards packets on the basis of the destination network only; it doesn’t look at Layer 4 properties or source IP addresses. How can we route traffic on the basis of other elements, such as the TCP destination port? To route packets the way we want, we need to deploy policy-based routing (PBR). PBR can make decisions on the basis of many parameters: source address, destination port, QoS marking.

Let’s proceed in an orderly fashion.

First, this is the starting config:

    interface Serial2/0
     description Bronze
     ip address 2.2.2.2 255.255.255.252
    !
    interface Serial2/1
     description Gold
     ip address 3.3.3.2 255.255.255.252
    !
    interface FastEthernet0/0
     description LAN
     ip address 192.168.0.1 255.255.255.0
    !
    interface FastEthernet1/0
     description ServerFarm
     ip address 1.1.1.1 255.255.255.0

We just have WAN interfaces up and running and the fastethernet interfaces pointing to the right subnet.

We set the default route through the Bronze link:

ip route 0.0.0.0 0.0.0.0 Serial2/0

With this simple configuration, 3 of the 5 flows are already routed the right way:

– LAN to WAN

– LAN to ServerFarm

– ServerFarm to LAN

Now we need to start our PBR configuration! To do this, we need to create route-maps and then apply them to the ingress interfaces on which policy-based routed packets will enter.

As said, PBR can make decisions on the basis of a lot of elements, such as source address and Layer 4 properties. So, let’s define an access list to match Mission Critical Services (telnet in our example):

    ip access-list extended GoldServices
     deny ip any 1.1.1.0 0.0.0.255
     permit tcp any any eq telnet
     deny ip any any

The access-list just matches telnet traffic that is not directed to our Server farm.
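In a real deployment the same access list would match whatever counts as mission critical. As a rough sketch only, assuming voice endpoints mark RTP with DSCP EF and use the UDP port range 16384 to 32767 (a common default on Cisco voice gear), and assuming a hypothetical database server at 203.0.113.10 listening on TCP port 1433, the list might look like this:

```
ip access-list extended GoldServices
 remark traffic towards our own Server farm never leaves through the WAN
 deny   ip any 1.1.1.0 0.0.0.255
 remark telnet, as in the lab
 permit tcp any any eq telnet
 remark ASSUMPTION: RTP on the common Cisco default UDP range
 permit udp any any range 16384 32767
 remark ASSUMPTION: packets already marked DSCP EF by the endpoints
 permit ip any any dscp ef
 remark HYPOTHETICAL database server, address and port are placeholders
 permit tcp any host 203.0.113.10 eq 1433
 deny   ip any any
```

The first deny entry stays at the top so that LAN-to-ServerFarm traffic keeps following the normal routing table, whatever its protocol or marking.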

Now we have to define a route-map matching Mission critical traffic and sending it out the Gold link…

    route-map PBR_LAN permit 10
     match ip address GoldServices
     set interface Serial2/1 Serial2/0

… then we apply it to the LAN facing interface:

    interface FastEthernet0/0
     description LAN
     ip policy route-map PBR_LAN

If a packet doesn’t match any route-map match statement it’s routed on the basis of the standard routing table (so, through the Bronze link).

Note that we used two interface names in the set interface command: if S2/1 is down, IOS will use S2/0, so we get a small level of redundancy and WAN-side fault tolerance for mission-critical traffic. We can achieve fault tolerance for LAN-to-WAN traffic too by adding a floating default route with a higher administrative distance:

ip route 0.0.0.0 0.0.0.0 Serial2/1 10
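At this point it can be worth checking that the policy is really attached and that packets are hitting it. The commands below are standard IOS show and debug commands, run on the gateway (called GW in the lab). Their output is left out here because it varies with the IOS release, and debug ip policy should be used with care on a busy production router.

```
! which route-map is applied to which interface
GW#show ip policy
! match/set clauses and the policy routing match counters
GW#show route-map PBR_LAN
! which default route is currently installed (Bronze, or Gold as backup)
GW#show ip route 0.0.0.0
! per-packet view of policy routing decisions
GW#debug ip policy
```

If the match counters of PBR_LAN stay at zero while a telnet session from the LAN is open, the access list or the interface the policy is applied to is the first thing to check.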

Now, LAN to Mission Critical Services is OK; we need to do the same for Server farm traffic:

    ip access-list extended ServerFarm-To-WAN
     deny ip 1.1.1.0 0.0.0.255 192.168.0.0 0.0.0.255
     permit ip any any
    !
    route-map PBR_ServerFarm permit 10
     match ip address ServerFarm-To-WAN
     set interface Serial2/1
    !
    interface FastEthernet1/0
     description ServerFarm
     ip policy route-map PBR_ServerFarm

Here our access list matches traffic going from the Server farm to destinations outside the LAN: of course we don’t want to route ServerFarm-to-LAN traffic through the WAN! Unfortunately we can’t add a second interface to the set interface command: our ISP will not accept traffic coming from 1.1.1.0/24 on the Bronze link.
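The set interface command fits this lab because both WAN links are point-to-point serial lines. As a sketch of an alternative that is not part of the original lab config: if the Gold link were handed off on a multi-access interface such as Ethernet, pointing the policy at the ISP's next-hop address would be the more usual choice. Here 3.3.3.1 is assumed to be the ISP end of the Gold /30.

```
route-map PBR_ServerFarm permit 10
 match ip address ServerFarm-To-WAN
 ! ASSUMPTION: 3.3.3.1 is the ISP side of the Gold point-to-point subnet
 set ip next-hop 3.3.3.1
```

The constraint stays the same either way: there is no second next hop to add, because the ISP only routes 1.1.1.0/24 over the Gold link.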

Routing is OK, so let’s take care of NAT.

We have 1 inside interface (the LAN facing fastethernet) and 2 outside interfaces:

    interface FastEthernet0/0
     description LAN
     ip nat inside
    !
    interface Serial2/0
     description Bronze
     ip nat outside
    !
    interface Serial2/1
     description Gold
     ip nat outside

Here we don’t have to think about “policy-based NAT”: when NATting, policies have already been applied, and packets routed accordingly. We just have to translate them in the right way!

First, define the pool to use when translating Gold packets:

ip nat pool LAN-to-Gold 1.1.1.20 1.1.1.20 netmask 255.255.255.0

Then define 2 new route-maps, used in the ip nat inside source command:

    route-map NAT_Gold permit 10
     match ip address LAN
     match interface Serial2/1
    !
    route-map NAT_Bronze permit 10
     match ip address LAN
     match interface Serial2/0
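Both route-maps reference an access list named LAN, whose definition does not appear in the configuration shown in this post. A minimal sketch, assuming it is only meant to match traffic sourced from the 192.168.0.0/24 LAN:

```
! ASSUMPTION: the LAN access list simply matches the inside subnet
ip access-list standard LAN
 permit 192.168.0.0 0.0.0.255
```

LAN-to-ServerFarm packets also match this list, but they leave through FastEthernet1/0, which is not a NAT outside interface, so they are not translated.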

Finally:

    ip nat inside source route-map NAT_Gold pool LAN-to-Gold overload
    ip nat inside source route-map NAT_Bronze interface Serial2/0 overload

Both route-maps match 192.168.0.0/24 traffic, but the first (NAT_Gold) only handles packets routed through the S2/1 (Gold) interface, while the second (NAT_Bronze) handles packets routed through the S2/0 (Bronze) interface. This way we can be sure the right inside global IP address is used when translating.

Some tests… In the GNS3 lab, PC and Server are actually two routers.

Traceroute and telnet from PC to the “Internet” (4.4.4.4 is an ISP loopback):

    PC#traceroute 4.4.4.4 n
    Type escape sequence to abort.
    Tracing the route to 4.4.4.4
      1 192.168.0.1 68 msec 40 msec 16 msec
      2 2.2.2.1 120 msec * 52 msec
    PC#
    PC#telnet 4.4.4.4
    Trying 4.4.4.4 ... Open
    User Access Verification
    Password:

On the gateway, traceroute traffic is translated to 2.2.2.2 (Bronze link) while telnet to 1.1.1.20 (Gold link):

    GW#show ip nat translations
    Pro Inside global      Inside local         Outside local      Outside global
    tcp 1.1.1.20:46878     192.168.0.10:46878   4.4.4.4:23         4.4.4.4:23
    udp 2.2.2.2:49178      192.168.0.10:49178   4.4.4.4:33437      4.4.4.4:33437
    udp 2.2.2.2:49179      192.168.0.10:49179   4.4.4.4:33438      4.4.4.4:33438
    udp 2.2.2.2:49180      192.168.0.10:49180   4.4.4.4:33439      4.4.4.4:33439

A traceroute from the server, going through the Gold link:

    Server#traceroute 4.4.4.4 n
    Type escape sequence to abort.
    Tracing the route to 4.4.4.4
      1 1.1.1.1 72 msec 52 msec 12 msec
      2 3.3.3.1 68 msec * 88 msec
    Server#

You can download GNS3-Lab from GNS3-Labs.com: http://www.gns3-labs.com/2009/04/14/gns3-topology-dual-wan-connection-on-cisco-with-policy-based-routing-pbr/

Posted by Pier Carlo Chiodi

[…] Pierky’s lab at his site: Dual WAN connection on Cisco with Policy-based routing (PBR) 1 […]

Thanks a lot. Assuming you have the budget, how can we make the server farm fault tolerant?

Hi Ramy, fault-tolerant data center design requires in-depth study and a deep knowledge of the specific network.

You can start by dual-homing servers to two access switches, with a VLAN spanning both switches, each of which is dual-homed to the higher-level network layer (as in the right part of the topology you can find here).

You should have upstream Internet access with two different ISPs, each announcing your own IP subnets.

These are just some guidelines; I suggest you read the Cisco Solution Reference Network Design Guides about Data Center design.

Hello Pierky, thank you, your reply is information rich as usual, I have a client with 3 different ISP lines, Internet router is a 2811, adv. sec with 3 ADSL HWICs, currently part of the deployment I’ve configured route maps through the router to appropriately route specific servers and hosts through corresponding WAN

My question is, is there a way to utilize all the 3 links, publish email servers through one, vpn through another, regular web usage through the third link and also have internet redundancy at least for the email servers publish?

The design guides are very serious, it always take me through advanced networking stuff, I work for a place which serves SMB sized places, so i usually don’t get to deploy campus or such, anyways I find your guidelines brilliant and so helpful

Thank you

Hi Ramy,

I think you can’t achieve what you need with a simple IP load-balance or failover.

Currently you have a 1-to-1 mapping between services (mail, vpn, web) and ISPs, so each server has an IP address from the mapped ISP subnet:

mail – ISP1 – 1.0.0.1/24

vpn – ISP2 – 2.0.0.1/24

web – ISP3 – 3.0.0.1/24

If ISP1 ADSL goes down, you lose connectivity to the only ISP that can route the mail server IP address. Other ISPs can’t route that subnet.

Unfortunately, getting a failover solution is not a simple matter in this scenario.

You have to mix NAT/PAT with a multiple-WAN firewall.

I can point you to this URL: http://www.shorewall.net/MultiISP.html#Complete

I did not read the full page and I never tested that solution, sorry.

Bye,

Ramy, I suggest you take a look at this: http://www.nil.com/ipcorner/SOHO_Servers/

You can find a NAT-based solution.

Bye

Hi there,

First of all really appreciated your effort!! My knowledge in cisco is very limited, but I guess i’m bit familiar with fortinet, juniper and procurve.

I have a situation same like this, I will give an brief idea. this is for one of my client, they have two leased line connection and using 2801 router it connected to two lan networks(say for 192.168.1.0, 192…2.0/24) they just wanna divide the complete traffic based on internal network. one of the internal(1.0/24) network traffic should go through wan1(complete traffic, no matter what it is) and other one has(2.0/24) to go through wan2

It is very easy to do in fortinet and juniper. but i’m bit confused in cisco now.

can you please assist me for this? your immediate reply would be highly appreciated!!!

Thanks in advance!!!

cheers,

Hi moh, I wrote a little post about your issue: Two LAN distributed on two WAN connections using Policy Based Routing (PBR).

Bye

[…] to Scotland, but I don’t want to leave unanswered a question that Moh asked me on my previous Dual WAN connection on Cisco with Policy-based routing (PBR) […]

Hi Guyz,

I am stuck on one scenario. It would be appreciated if you could guide me in designing a solution.

Our client (a call center) has two Internet connections: one provided by us, which is dedicated, and another one, which is DSL (shared), from another ISP.

Now, they want to utilize both links in the following way:

(1) Client wants to route “voice traffic” through our dedicated link.

(2) Client wants to route “Internet traffic” through DSL link.

Thanks,

Hi Kashif,

in this post's comments Michael asked a similar question. Take a look at the post, it may be useful.

Bye

Thanks Pierky.

Hello Pierky,

Great article. Gratsi for your blog, I really like to read it.

I do have a question for you… is there a way to do policy routing after the nat translation? For instance the same internal network has two WAN links, each with their own IP scope. I have static nat translations for public IPs to lan IPs on both outside networks translating to the same internal hosts. The idea is for primary and backup IPs over a primary and backup circuits working simultaneously. Since I can’t match the ACL based on source or destination before the nat, how might I go about this? Default route to a loopback adapter with a policy route on the loopback interface? Or is my only course to have another router outside the nat that makes policy routes based on outside source?

Thanks! -Cheers, Peter.

Hi Peter, nice to hear my blog is useful to you!

I think this post by Ivan Pepelnjak may be the solution for your scenario: Servers in Small Site Multi-homing. There you can also find other posts about small site multihoming, where you have two upstream ISPs but no public Autonomous System or BGP setup.

Bye,

Pierky

I have a similar scenario that I would like to implement.

Multiple WAN links:

Example:

ADSL1

ADSL2

ADSL3

put with 3 wics on a 2600 router.

Then I have the LAN Side 172.16.0.0/16

Each ADSL Link has a /28 public IP Range assigned to it.

I need to do static NAT 1-1 of the public ip (13 used for each adsl link to 13 clients) in the range 172.16.1.1/16

How can I configure the single router so that a specific IP goes out through a specific WAN link?

example:

ADSL1 – public range 8.8.8.0/28 –> (example) 8.8.8.7 1-1 to 172.16.1.7

etc. etc.

Thanks

pls how can i create a web page on a router and then test http traffic from another router so as to verify if the pbr is working perfectly ok this is bcos i am practising for exam using GNS3 and you know i cant simulate a PC i have to use a router pls i need a reply

Hi,

you can use two routers as PC and server; use telnet instead of http. You may configure your “server” (a router) to listen on telnet port by configuring the “line vty 0 4” to accept connection. If it works for telnet it works for HTTP too – provided a correct configuration! 🙂

Pierky

hi, ur site has been helpful to me for quite a long time now.recently am plague with this issue.

I av a primary link and a backup link in a branch and need telnet traffic to pass 2ru ISP1 and http/https traffic to pass 2ru ISP2 and the traffic to pass 2ru the ACTIVE link in case any of the isp goes down.

The issue is that i do not NAT on my router but instead have a static route 2ru the isps to my HEAD-OFFICE.

And i also have a tunnel from my branch to the HEAD-OFFICE.bellow is a sample config of my branch router.

    hostname BRANCH1
    !
    boot-start-marker
    boot-end-marker
    !
    no aaa new-model
    !
    resource policy
    !
    memory-size iomem 5
    ip subnet-zero
    !
    ip cef
    no ip dhcp use vrf connected
    !
    no ip ips deny-action ips-interface
    !
    no ftp-server write-enable
    !
    no crypto isakmp ccm
    !
    interface Tunnel1
     ip address 172.16.1.1 255.255.255.0
     shutdown
     tunnel source Ethernet0/1
     tunnel destination 10.2.1.1
    !
    interface Tunnel2
     ip address 172.16.2.1 255.255.255.0
     tunnel source Ethernet0/2
     tunnel destination 10.3.1.1
    !
    interface Ethernet0/0
     des LAN
     ip address 192.168.1.1 255.255.255.0
    !
    interface Ethernet0/1
     des WAN LINK TO ISP1
     ip address 10.1.1.1 255.255.255.0
     half-duplex
    !
    interface Ethernet0/2
     des WAN LINK TO ISP2
     ip address 10.1.2.1 255.255.255.0
     half-duplex
    !
    interface Ethernet0/3
     no ip address
     shutdown
     half-duplex
    !
    router eigrp 111
     network 172.16.0.0
     network 192.168.1.0
     no auto-summary
    !
    ip http server
    no ip http secure-server
    ip classless
    ip route 10.2.1.0 255.255.255.0 10.1.1.2
    ip route 10.3.1.0 255.255.255.0 10.1.2.2
    !
    control-plane
    !
    line con 0
    line aux 0
    line vty 0 4
     login
    !
    end

Thanks in advance.

Thanks for the excellent explanation of Policy-based routing.

It helped me to configure PBR on a FortiGate firewall (with routing capabilities) which is completely unlike a Cisco IOS device, but the underlying principles are the same.

This may be a long shot because the blog is old but i am stuck with a little problem, hopefully someone here can help!

I want to setup dual WAN links on a 891 router but there is a caveat…

A. I only have one internal LAN

B. One ISP has to be used for all traffic leaving the LAN, Web, SSH, Etc…

C. The only purpose of the second ISP is for an IPSec tunnel coming from the Outside (www)

Ascii looks like this:

    --- WAN ISP1 > All Traffic from LAN
    |
    LAN (172.16.30.0/24)
    |
    --- WAN ISP2 < Incoming IPSec Tunnel

I have tried a bunch of stuff with PBR and NAT and no bueno…

Thanks for sharing your thoughts about network installation.

Regards

Hi there, it’s nice to see lab like this but I’m curious, what if i want to add inter-vlan in your lab how can i accomplish having dual ISP and at the same time having inter-vlan works on LAN.

to elaborate what i mean company E have 2 ISP for load-balancing and fail-over. in their LAN they are using INTER-VLAN for every dept, PACL is created to avoid Dept. A from accessing Dept. B. same thing with other dept.

    2 ISP
    Router---DMZ
    |
    ether channel
    |
    L3 Switch
    |
    Workstation

appreciate if you could help me for this scenario using GNS3. Thanks in advance!

Dear Pierky,

In defining route-maps for the ip nat inside, the “match ip address LAN”, “LAN” is not defined. Can you please elaborate little bit on it.